I just found and fixed a major performance bottleneck in a web application I’ve been working on. The application consists of a single page populated with various dynamic graphs, the data for which is loaded via around 10 separate AJAX requests that fire periodically to update the graphs and statistics.

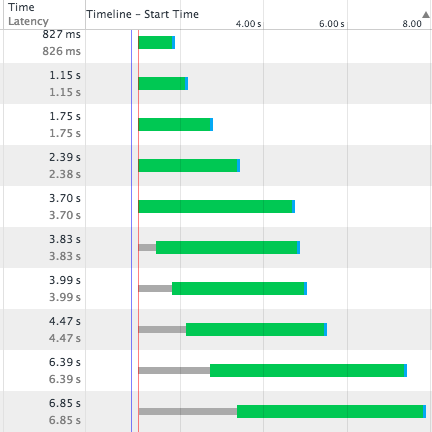

It appeared, watching the network tab in Google Chrome developer tools, that each AJAX request was queueing behind the previous one. There was a clear progression in the response graphs with latency getting longer with each request (The green bit in the cart below).

All the requests were fired at the same time but returned sequentially, no matter how much work was being done to build each response. Another symptom was that it seemed impossible to navigate away from the page, or refresh it, without waiting for the last pending AJAX request to complete. Since one of the AJAX responses took up to 10 seconds to return, this meant a significant delay when moving away from the page and when testing by refreshing it. I amended the JavaScript side of the app to abort all AJAX requests on `beforeunload`, but it seemed to make little difference to the latency issue.
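For illustration, the "abort on `beforeunload`" change could look something like the sketch below. It assumes the requests are made with `fetch` and `AbortController` rather than whatever AJAX library the app actually used; `createRequestTracker`, `trackedFetch` and `abortAll` are hypothetical names, not part of the original code.

```javascript
// Hypothetical helper: remembers every in-flight request so they can all be aborted at once.
// fetchImpl is injectable so the helper can be exercised without a network.
function createRequestTracker(fetchImpl = (...args) => globalThis.fetch(...args)) {
  const controllers = new Set();

  function trackedFetch(url, options = {}) {
    const controller = new AbortController();
    controllers.add(controller);
    // Forget the controller once the request settles, whether it succeeded or failed.
    return fetchImpl(url, { ...options, signal: controller.signal })
      .finally(() => controllers.delete(controller));
  }

  function abortAll() {
    for (const controller of controllers) controller.abort();
    controllers.clear();
  }

  return { trackedFetch, abortAll, pendingCount: () => controllers.size };
}

const tracker = createRequestTracker();

// In a browser, cancel everything still pending when the page is about to unload.
if (typeof window !== "undefined") {
  window.addEventListener("beforeunload", () => tracker.abortAll());
}
```

Each periodic update would then call `tracker.trackedFetch(url)` instead of `fetch(url)`. This only cancels the requests on the client side, which may be why it made so little difference here.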

The answer was to be found buried in an unaccepted answer with only 1 upvote (until now) on StackOverflow. What was happening was that the PHP session was being locked by the first AJAX request. The PHP side of the app wouldn’t even start work on the subsequent AJAX requests until the first had completed and freed up the session (with PHP’s default file-based session handler, each later request blocks in `session_start()` until the lock is released). And this happens for every single request, forcing responses to come back in the same sequence in which they were sent.
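To make the queueing concrete, here is a toy timing model in JavaScript. It is only a sketch under some assumptions: all requests arrive at the same moment, they acquire the session lock in the order they were fired, and the timings are invented for illustration. `completionTimes` and `lockHeldMs` are hypothetical names.

```javascript
// Toy model: every request arrives at t = 0 and needs `work` ms of server time.
// `lockHeldMs` is how long a request keeps the session lock after acquiring it.
function completionTimes(requests) {
  let lockFreeAt = 0; // time at which the session lock next becomes available
  return requests.map(({ work, lockHeldMs }) => {
    const start = lockFreeAt;        // blocked until the previous holder releases the lock
    lockFreeAt = start + lockHeldMs; // this request releases the lock after lockHeldMs
    return start + work;             // the response is ready once the work is done
  });
}

const work = [300, 100, 2000, 200]; // invented response-building times in ms

// Lock held for the whole request: each response queues behind the previous one.
console.log(completionTimes(work.map((w) => ({ work: w, lockHeldMs: w }))));
// [300, 400, 2400, 2600]

// Lock held for only 5 ms: each response depends almost entirely on its own work.
console.log(completionTimes(work.map((w) => ({ work: w, lockHeldMs: 5 }))));
// [300, 105, 2010, 215]
```

In the first case the latencies only ever grow, which matches the staircase pattern in the network tab.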

This also meant that navigating to another page on the same site, or refreshing the page, had to wait. If I were navigating away from the site entirely it wouldn’t have been an issue, but because the new page I was now requesting utilised the same PHP session, it had to wait until all pending requests to that session were complete. This problem would not be evident on a site that didn’t use sessions to maintain state.

The solution is to free up the session as soon as you can in the responding PHP script, once it no longer needs to write any session data, by calling

session_write_close();

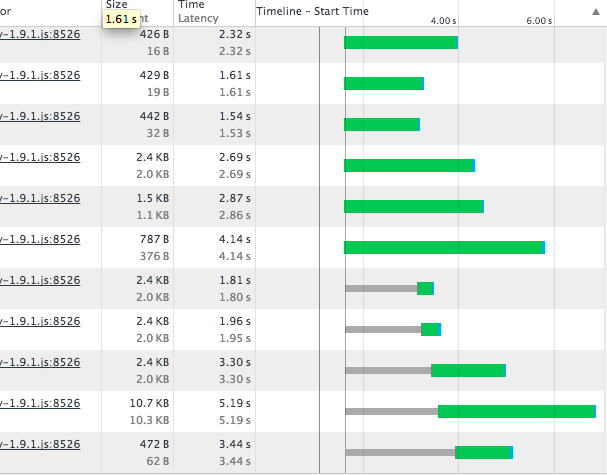

After adding this line my network responses looked like this:

You can see now that the limiting factor is the number of permitted concurrent connections. The latency for each request now depends only on the time taken to compile each response, and later requests complete before earlier, time-consuming ones. This has also had the effect of taking two whole seconds off the apparent loading time of the page, and navigation across the site has dramatically increased in speed.

The graph above also points towards a further optimisation that could be made. The thin grey bars in the chart occur when a request is waiting for a free connection to the server. Note that the lengths of the grey bars at positions 7 & 8 correspond to the latencies of requests 3 & 2 respectively. If 7 and 8 were moved up to fire first, then the later requests that are awaiting a connection would have grey bars equivalent in length to the green portions of these requests, taking nearly 2 seconds off the apparent page load time.
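The effect of firing order can be sketched with a small simulation. This is a rough model, not a measurement: it assumes a limit of 6 parallel connections per host (typical for browsers over HTTP/1.1), the durations are made-up numbers rather than the ones from my app, and `simulateQueue` is a hypothetical helper.

```javascript
// Simulates a browser that allows `maxConnections` parallel requests to one host.
// `durations` are response times in ms, listed in the order the requests are fired.
function simulateQueue(durations, maxConnections) {
  const freeAt = new Array(maxConnections).fill(0); // when each connection is next idle
  const results = durations.map((duration) => {
    // Take whichever connection becomes free first.
    const slot = freeAt.indexOf(Math.min(...freeAt));
    const waited = freeAt[slot]; // the "grey bar": time spent waiting for a connection
    freeAt[slot] = waited + duration;
    return { waited, finished: waited + duration };
  });
  return { results, total: Math.max(...freeAt) };
}

// Slow requests fired last: they wait for a connection, then run for a long time.
const slowLast = simulateQueue([900, 500, 400, 800, 700, 600, 2000, 1900], 6);
console.log(slowLast.total); // 2400

// Same requests with the two slow ones fired first: only quick requests have to wait.
const slowFirst = simulateQueue([2000, 1900, 900, 500, 400, 800, 700, 600], 6);
console.log(slowFirst.total); // 2000
```

With these invented numbers the saving is 400 ms; the real saving depends on how long the queued requests take and how long they would otherwise wait.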